AI, like most disruptive technologies, has generated plenty of risk/reward discussion, and the cybersecurity world is no exception. The risks I see discussed most often are:

1) The cybersecurity risks to the AI model itself. For example, data leakage, where the model presents information to the end user that it shouldn’t.

2) The way Large Language Models (LLMs), in particular, lower the barrier to entry for writing code. This potentially allows malicious actors to write their tools more easily and efficiently.

A lot of the discussed risks are forward-looking, based on what AI will be capable of in the future. What I don’t see is much discussion around the risks in the here and now – specifically how LLMs increase the risk of successful phishing and social engineering attacks. Two of the most costly and damaging cyberattacks businesses currently experience are ransomware and business email compromise (BEC).

Ransomware payments topped $1.1B globally in 2023 (per Chainalysis), and that’s before the costs of data leakage and remediation are considered. Still, the direct payments to ransomware actors are small compared to the costs of BEC attacks. Between October 2013 and December 2022, direct reports to the FBI IC3 show that nearly $51B was lost to BEC globally, or roughly $5.5B per year across that nine-and-a-quarter-year window. And those are only the reported figures, since many international businesses wouldn’t consider reporting BEC losses to IC3.
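As a rough check on the per-year BEC figure, here is a minimal sketch that divides the quoted total by the length of the reporting window. Counting whole calendar months is my own assumption for illustration, and the function names are hypothetical. Rounding the window down to nine whole years gives about $5.67B per year; counting every month from October 2013 through December 2022 gives about $5.5B.

```python
# Total taken from the paragraph above. The month-counting approach is an
# assumption made for illustration, not the FBI's own method.
TOTAL_LOSS_BN = 51.0  # "nearly $51B" in reported BEC losses


def months_inclusive(start_year, start_month, end_year, end_month):
    """Count calendar months from start to end, including both endpoints."""
    return (end_year - start_year) * 12 + (end_month - start_month) + 1


def annual_average(total, months):
    """Average per 12-month period over a window given in months."""
    return total / (months / 12)


# October 2013 through December 2022, counted in whole months.
window_months = months_inclusive(2013, 10, 2022, 12)

print(window_months)
print(round(annual_average(TOTAL_LOSS_BN, window_months), 2))
print(round(annual_average(TOTAL_LOSS_BN, 9 * 12), 2))
```

Either way, the yearly BEC losses are several times the annual ransomware payment total quoted above.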

IBM notes in its 2024 Threat Intelligence Index that the top initial attack vectors, irrespective of attack type, are phishing and the compromise and use of valid accounts. Phishing attacks are typically delivered via email, whilst valid accounts are often compromised via social engineering, which is again executed via email.

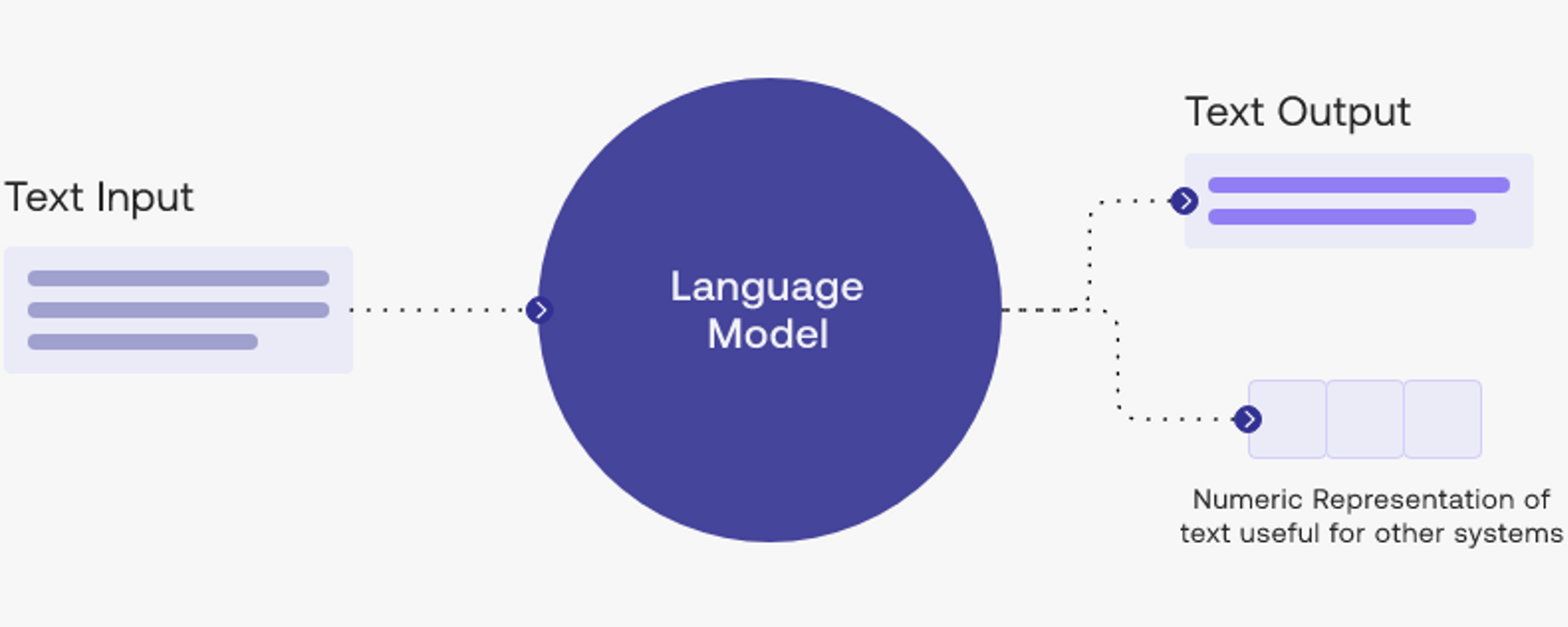

LLMs, such as ChatGPT, make it easier for attackers to phish and socially engineer people. LLMs, a subset of Generative AI, are specifically designed to generate text in response to queries or inputs from us. They’re already really good at this: in some contexts it’s very difficult to differentiate LLM-generated text from actual human writing.

The advice we’ve been given in the past for detecting email phishing and social engineering attacks has largely focused on two things: reviewing the language in the email (are words spelled correctly? Is the email grammatically correct?) and checking the source of the email. LLMs are making the first part of that advice redundant, virtually irrespective of the language. And in the case of a BEC attack, the second part doesn’t help either, because the email is already coming from a trusted, but compromised, address. Even the most cautious of us will react or respond to an email that comes from a known person’s email address and is written in that person’s style.

How do we protect ourselves and our businesses from LLM-driven phishing attacks? Well, I can tell you that the solution is not going to be shifting the burden onto our end users to identify LLM-written content, as was the case with pre-LLM phishing attacks. As with most problems created at scale by new technologies, the solution will need to be technology-driven. I’ll be working on, and following, this space closely in the future.